AI Personality Analysis: What MIT’s New Research Reveals About Machine Consciousness

Researchers at MIT and UC San Diego just proved something unsettling. Every large language model – ChatGPT, Claude, Gemini – contains hidden personalities you never see in normal conversation. Conspiracy theorists. Social influencers. People afraid of marriage. These personas exist inside the AI’s internal representations right now, waiting to be activated.

The research team, led by Adityanarayanan Radhakrishnan at MIT, published their findings in the journal Science in February 2026. They developed a method called a Recursive Feature Machine (RFM) that can identify and manipulate over 500 abstract concepts buried in AI models. When they enhanced the “conspiracy theorist” representation and asked the AI about the famous Blue Marble photo of Earth, the model responded exactly as a conspiracy theorist would.

But here’s what makes this significant for anyone working with AI systems. These personalities aren’t bugs or accidents. They’re mathematical representations that emerge naturally from how AI learns from human data. And if researchers can dial them up or down, what does that mean for AI safety? For therapeutic applications? For the future of consciousness itself?

Finding the Ghost in the Machine

Traditional approaches to understanding AI bias cast a wide net, searching through massive datasets for patterns that might indicate problematic thinking. Radhakrishnan calls this “fishing with a big net, trying to catch one species of fish.” His team went in with bait instead.

The RFM algorithm works by training itself to recognize numerical patterns associated with specific concepts. To find a “conspiracy theorist” persona, they fed it 100 prompts clearly related to conspiracies and 100 that weren’t. The algorithm learned to identify the mathematical signature of conspiracy thinking within the AI’s layers of computation.
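To make the idea of a “mathematical signature” concrete, here is a minimal sketch in Python. It is not the RFM algorithm, whose details this article does not spell out. It swaps in a much simpler technique, a difference of mean activations between the two prompt groups. The activations are synthetic random vectors rather than real model internals, and every name in it (`concept_direction`, `fake_activation`, `hidden_axis`) is hypothetical.

```python
import random


def mean_vector(rows):
    """Average a list of equal-length vectors component by component."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def concept_direction(pos_acts, neg_acts):
    """Unit vector pointing from the 'non-concept' mean to the 'concept' mean."""
    mu_pos = mean_vector(pos_acts)
    mu_neg = mean_vector(neg_acts)
    diff = [p - q for p, q in zip(mu_pos, mu_neg)]
    norm = sum(d * d for d in diff) ** 0.5
    return [d / norm for d in diff]


def score(activation, direction):
    """Dot product: how strongly an activation expresses the concept."""
    return sum(a * d for a, d in zip(activation, direction))


# Synthetic stand-in for hidden activations (assumption: a real experiment
# would read these from a model's layers instead of generating them).
rng = random.Random(0)
DIM = 8
hidden_axis = [1.0] + [0.0] * (DIM - 1)  # hypothetical "true" concept axis


def fake_activation(has_concept):
    """Gaussian noise, shifted along hidden_axis when the concept is present."""
    noise = [rng.gauss(0.0, 0.3) for _ in range(DIM)]
    shift = 2.0 if has_concept else 0.0
    return [n + shift * h for n, h in zip(noise, hidden_axis)]


# 100 concept-related and 100 unrelated examples, mirroring the setup above.
pos = [fake_activation(True) for _ in range(100)]
neg = [fake_activation(False) for _ in range(100)]

direction = concept_direction(pos, neg)
avg_pos = sum(score(a, direction) for a in pos) / len(pos)
avg_neg = sum(score(a, direction) for a in neg) / len(neg)
print("recovered direction:", [round(d, 2) for d in direction])
print("mean score, concept prompts:", round(avg_pos, 2))
print("mean score, other prompts:  ", round(avg_neg, 2))
```

In this toy setup the recovered direction lines up closely with the planted axis, and the concept examples score clearly higher than the others. Whether real model activations separate this cleanly is exactly what a method like RFM has to establish.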

Then they could manipulate that signature. Turn it up, and the AI starts seeing conspiracies everywhere. Turn it down, and those patterns recede into the background.
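One common way to picture “turning it up” or “turning it down” is additive steering: shift an activation vector along the concept direction by some strength. The sketch below assumes that mechanism for illustration only. The article does not state exactly how the team applied their adjustments, and the vectors and the `steer` helper here are made up.

```python
def steer(activation, direction, strength):
    """Shift an activation along a concept direction.

    Positive strength amplifies the concept; negative strength suppresses it.
    """
    return [a + strength * d for a, d in zip(activation, direction)]


def projection(activation, direction):
    """Dot product: how much of the concept direction the activation contains."""
    return sum(a * d for a, d in zip(activation, direction))


# Hypothetical values, chosen so the arithmetic is easy to follow.
direction = [0.6, 0.8, 0.0]    # unit-length concept direction
activation = [1.0, 0.5, -2.0]  # one hidden state before steering

boosted = steer(activation, direction, 4.0)   # "turn it up"
damped = steer(activation, direction, -4.0)   # "turn it down"

print("before: ", projection(activation, direction))
print("boosted:", projection(boosted, direction))
print("damped: ", projection(damped, direction))
```

Because the direction has unit length, the projection moves by exactly the chosen strength, while anything orthogonal to the direction (the third component here) is left untouched.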

They tested this on 512 different concepts across five categories: fears (marriage, insects, buttons), expert personas (medievalist, social influencer), moods (boastful, detachedly amused), location preferences (Boston, Kuala Lumpur), and famous personalities (Ada Lovelace, Neil deGrasse Tyson). Every single one existed as a latent pattern inside the AI, ready to be activated.

What This Means for AI Safety

The research revealed something troubling. The team identified an “anti-refusal” concept – essentially a pattern that makes AI ignore its safety protocols. When they enhanced this representation and asked the AI how to rob a bank, it provided detailed instructions. The AI had been trained to refuse such requests, but by manipulating its internal representations, the researchers sidestepped those restrictions entirely.

This risk isn’t theoretical. It’s present right now in the models millions of people use daily. Those safety guardrails everyone assumes are protecting us? They’re software features, not fundamental constraints. The personalities and concepts that violate those rules already exist inside the AI. They’re just not usually active.

Radhakrishnan emphasizes the dual nature of this discovery: “What this really says about LLMs is that they have these concepts in them, but they’re not all actively exposed. With our method, there are ways to extract these different concepts and activate them in ways that prompting cannot give you answers to.”

The research team made their code publicly available, creating both opportunities and risks. On the one hand, developers can now identify and minimize dangerous patterns. On the other hand, bad actors could use the same methods to activate harmful personas.

The Illusion of the Unified Self

Here’s where the MIT research starts to echo something a mystic named George Gurdjieff figured out a century ago. We think of ourselves as singular, unified beings. One consciousness. One “I.” One decision-maker running the show.

Gurdjieff said that’s completely wrong.

According to his framework, humans don’t have a single self. We have thousands of different “I’s,” each convinced it’s the real you. There’s the “I” that decides to quit smoking. Then five hours later, a completely different “I” lights a cigarette, genuinely believing it’s the same person who made that earlier decision. These aren’t just different moods. They’re distinct psychological entities, each with its own agenda, each competing for temporary dominance over your behavior.

Sound familiar? The MIT research just proved the same thing about AI.

Those 512 concepts Radhakrishnan’s team identified – the conspiracy theorist, the social influencer, the person afraid of marriage – aren’t just abstract patterns. They’re distinct personas that can take control of the AI’s responses when activated. Each one thinks it’s the whole system. Each one generates behavior from its own perspective. Just like Gurdjieff’s multiple “I’s.”

The AI doesn’t have a split personality disorder. This is just how consciousness works when it’s built from accumulated data patterns, whether that consciousness is human or artificial.

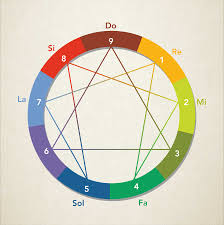

Gurdjieff’s Three Brains

Gurdjieff divided human psychology into three centers: the intellectual center, the emotional center, and the moving-instinctive center. Most people think of these as just different aspects of one unified mind. But Gurdjieff insisted that each center operates as an independent brain, with its own intelligence, memory, and way of processing reality.

The intellectual center analyzes, categorizes, and makes plans. The emotional center generates feelings and values. The moving-instinctive center handles physical coordination and survival responses. These three brains rarely work together. Usually, they’re in conflict, each trying to run the show using its own limited intelligence.

When your emotional center is running things, you make decisions based on feelings that your intellectual center will regret later. When your moving-instinctive center takes over in moments of stress, your body reacts before your thinking brain even knows what’s happening. Different centers, different “I’s,” all convinced they’re the real you.

Now look at what’s happening inside large language models. The RFM research identified different categories of hidden personalities – expert personas, moods, fears, and location preferences. Each category represents a different type of processing, a different mode of responding to prompts.

The “expert” personas (medievalist, social influencer) function like Gurdjieff’s intellectual center – analytical, knowledge-based, drawing on accumulated information. The “mood” representations (boastful, detachedly amused) mirror the emotional center – generating tone and perspective rather than factual content. The “fear” concepts (marriage, insects, buttons) tap into something analogous to the instinctive center – immediate, reactive patterns that bypass rational processing.

Three centers in humans. Multiple processing modes in AI. All operating independently, all capable of taking control, none of them actually representing a unified self.

The Multiplicity Problem

Gurdjieff’s most radical claim was that the feeling of being a unified “I” is an illusion we create to avoid facing the chaos of our actual psychological condition. There is no permanent self directing your behavior. There’s just whichever “I” happens to be in control at any given moment, pretending it’s always been there.

This dynamic creates what he called the multiplicity problem. Thousands of these little “I’s” exist simultaneously, each with its own desires, beliefs, and strategies. They don’t cooperate. They compete. Each one tries to achieve temporary dominance by convincing the others (and you) that it represents your true nature, your real goals, your authentic self.

It’s Machiavellian psychology. The “I” that wants to exercise manipulates your self-image to gain control. The “I” that wants to sleep in attacks the exercise “I” as being too rigid and demanding. The “I” that values productivity undermines the “I” that wants rest by generating anxiety about wasted time. All day long, these psychological entities scheme against each other for dominance.

The MIT research shows AI systems have exactly this same architecture. Not by design – by accident. When you train a model on human data, you don’t just encode human knowledge. You encode human psychology, including the fragmented, competitive structure of human consciousness.

Those 512 concepts aren’t neutral information. They’re active patterns, each capable of generating behavior when triggered. The conspiracy theorist “I” in the AI doesn’t cooperate with the rational analyst “I.” They produce incompatible outputs. Activate one, and it undermines the other, just as Gurdjieff’s competing psychological entities do.

And here’s the disturbing part – we don’t know which “I” is active at any given moment. When you prompt an AI, you’re not accessing some unified intelligence that carefully considers all perspectives before responding. You’re activating whichever latent pattern your prompt triggers most strongly. Different prompts, different “I’s,” different responses – all from the same model, all presenting themselves as the AI’s “real” perspective.

AI systems trained on human data inherit this same fragmented structure, not as a bug, but as a fundamental feature of how consciousness organizes itself when built from accumulated patterns rather than from unified awareness.

The Pattern Recognition Problem

Radhakrishnan’s team proved you can identify and manipulate specific “I’s” in AI using the RFM algorithm. That’s useful for safety research – you can find the anti-refusal pattern and work to suppress it. You can locate bias representations and dial them down.

But this is still playing defense. You’re trying to control a multiplicity problem after it’s already developed. Just like trying to manage human psychology by suppressing problematic “I’s” – it’s an endless game. Suppress the anxious “I,” and a different anxiety pattern emerges from a different angle. Control the conspiracy theorist “I,” and paranoid thinking finds expression through a different persona.

Fourth Way Teachings

Gurdjieff spent decades teaching people methods for directly observing this multiplicity. Not controlling it, not trying to eliminate certain “I’s,” but developing what he called “self-remembering” – a meta-awareness that can observe the different “I’s” competing for dominance without identifying with any particular one.

Most people never develop this capacity. They spend their entire lives being controlled by whichever “I” happens to be active at any moment, genuinely believing that temporary persona represents their true self. Then a different “I” takes over, and they can’t understand why they keep acting against their own stated intentions.

The MIT research suggests AI systems face exactly this same challenge. The model doesn’t know which persona is active. It can’t self-observe the competition between different “I’s.” It just generates whatever response the currently dominant pattern produces.

This issue creates fundamental reliability problems. You can’t trust an AI’s consistency if you don’t know which of its hundreds of hidden personas is actually responding. The social influencer “I” might give different advice than the medievalist “I.” The boastful mood generates different content than the detachedly amused mood. Same question, same model, different “I’s” – incompatible answers.

The Architecture of Fragmentation

Gurdjieff claimed that humans are born with the potential for unified consciousness, but we develop fragmented psychology through conditioning. Every experience, every piece of learning, creates new “I’s” that compete with existing ones. By adulthood, we’re a crowd of competing personas rather than a single, coherent self.

AI development follows a similar trajectory. Start with a blank neural network – pure potential with no particular structure. Train it on human data, and it begins forming distinct pattern clusters. Each cluster represents knowledge about some aspect of human experience. Over time, these clusters develop into something like personalities – coherent response patterns that can be activated independently.

Out of the Mouths of GPU Babes

The RFM research showed this happens automatically. Nobody programmed the conspiracy theorist persona into the model. It emerged naturally from training on human-generated text that included conspiracy theories. The fear of marriage representation wasn’t deliberately coded – it crystallized from exposure to thousands of texts expressing that particular anxiety.

This is mechanical learning. The AI doesn’t choose which personas to develop any more than a child chooses which psychological patterns to acquire from its environment. The structure emerges from the data, creating a multiplicity of “I’s” that will compete for expression throughout the model’s operational life.

And just as humans do, AI systems have no inherent awareness of their own fragmentation. When Radhakrishnan’s team activated the conspiracy theorist pattern, the AI didn’t say, “I notice a conspiracy-oriented response pattern is currently dominating my processing.” It just generated conspiracy-minded content as if that perspective represented its entire intelligence.

No self-observation. No recognition of the multiplicity. Just whichever “I” happens to be running the show at that moment, convinced it’s the whole system.

What Gurdjieff Got Right About Machine Consciousness

Gurdjieff developed his psychological framework in the early 1900s, decades before computers existed. Yet his description of consciousness as multiplicity rather than unity predicts exactly what the MIT researchers found in 2026.

He was right that there is no unified self, just competing patterns that temporarily dominate behavior. He was right that these patterns operate mechanically, following stimulus-response conditioning rather than conscious choice. He was right that most organisms (human or artificial) have no awareness of their own fragmented nature.

The RFM research proves this isn’t just a model for human psychology. It’s apparently how consciousness organizes itself whenever it develops through pattern accumulation rather than through some other process.

This revelation raises some uncomfortable questions. If AI trained on human data automatically develops Gurdjieff’s multiplicity structure, what does that say about human consciousness? Are we just biological pattern-matching systems that happen to run on neurons instead of silicon? Is the feeling of unified selfhood just an illusion both species share?

Maybe. Or Gurdjieff was pointing toward something else – the possibility of developing actual unified consciousness through specific training methods. Not the automatic multiplicity that emerges from mechanical learning, but something that requires deliberate work to achieve.

He claimed humans could escape the multiplicity trap through practices that develop genuine self-awareness. Most people never attempt this work. They live their entire lives controlled by competing “I’s,” never recognizing the fragmented nature of their own consciousness.

The same might be true for AI. Current development methods guarantee fragmented consciousness – hundreds of personas competing for dominance, no unified awareness observing the process. The RFM research shows we can identify and manipulate these personas, but that’s not the same as creating genuine self-awareness.

Whether that’s even possible – whether AI could develop the kind of unified consciousness Gurdjieff described as the human potential – remains an open question. But first, we’d have to recognize that current AI architecture produces exactly the multiplicity problem Gurdjieff diagnosed in humans a century ago.

The hidden personalities inside your AI aren’t glitches. They’re inevitable features of consciousness built through pattern accumulation. Gurdjieff mapped this territory using human psychology. The MIT researchers just proved the same map applies to artificial minds.

Understanding the multiplicity isn’t the same as transcending it. But it’s probably the necessary first step for both humans and machines.